Fine-Tuning vs Prompt Engineering

You have a generative AI model, and you want it to perform better on your particular business problem. Should you fine-tune the model or refine your prompts?

This question matters more and more to AI leaders, product teams, and enterprises building custom AI solutions. Get it wrong and you waste time and money. Get it right and you can create a real competitive advantage.

Let's look at both strategies, when to use each one, and how to decide for your organisation.

What Is Prompt Engineering?

Prompt engineering is the practice of crafting instructions that help an AI model generate better results without changing the model itself.

Think of it as giving clearer, more detailed instructions to the same model everyone else is using. You are not retraining anything; you are simply asking smarter questions.

Key characteristics:

Can be used with pre-trained models (GPT-4, Claude, Gemini, etc.)

Immediate results, with no training time required

Low cost and minimal risk

Very flexible, allowing quick iteration

Limited by what the model learned during its original training

Common prompt engineering techniques:

Chain-of-thought: asking the model to solve complex problems by breaking them down into smaller steps

Few-shot prompting: providing examples to help the model understand the task

Role-based prompting: assigning a role ("Act as an expert SEO strategist")

Structured outputs: requesting JSON, tables, or other specific formats

Constraint-based prompting: setting limits ("Keep it under 100 words")
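To make these techniques concrete, here is a minimal Python sketch that combines role-based, constraint-based, structured-output, few-shot, and chain-of-thought prompting in a single request. It assumes the common list-of-messages chat shape (`role`/`content` dictionaries). The function name `build_messages` and all example content are hypothetical, and the exact request format varies by provider, so adapt it to your SDK before sending anything.

```python
import json

def build_messages(role, examples, task, max_words=100):
    """Combine several prompt engineering techniques into one chat-style request."""
    # Role-based, constraint-based and structured-output prompting go in the system message.
    system = (
        f"Act as {role}. "
        f"Keep each answer under {max_words} words. "
        'Respond only with JSON of the form {"answer": "...", "reasoning_steps": ["..."]}.'
    )
    messages = [{"role": "system", "content": system}]
    # Few-shot prompting: each worked example becomes a user/assistant pair.
    for question, answer in examples:
        messages.append({"role": "user", "content": question})
        messages.append({"role": "assistant", "content": json.dumps(answer)})
    # Chain-of-thought: ask the model to reason through the task step by step.
    messages.append({"role": "user", "content": task + "\nThink through the problem step by step."})
    return messages

# Hypothetical usage with one few-shot example.
msgs = build_messages(
    role="an expert SEO strategist",
    examples=[("Suggest a title for a post about LoRA.",
               {"answer": "LoRA Explained", "reasoning_steps": ["Keep it short", "Name the topic"]})],
    task="Suggest a meta description for a post on prompt engineering.",
)
```

Because nothing is retrained, changing the role, the examples, or the word limit is a one-line edit. That is what makes iteration with prompt engineering so fast.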

When prompt engineering is the right choice:

You want to accomplish something quickly.

You're experimenting with a new use case.

You are working with standard, general-purpose models.

Your domain-specific needs are not very demanding.

You have a limited budget.


What Is Fine-Tuning?

Fine-tuning is the process of further training a pre-trained AI model on your own data so that it learns your specific patterns, terminology, and business logic.

The result is a custom model that understands your world far better than a generic one.

Key characteristics:

Needs your own training data (hundreds to thousands of examples)

Takes time to set up, train, and validate

Costs more upfront, but can be cheaper per query at scale

Improved performance on domain-specific tasks

Changes the model's behaviour persistently, because what it learns is stored in its weights
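The main prerequisite is training data. For supervised fine-tuning, this usually means a file of input-output pairs. Below is a minimal Python sketch that writes such pairs as JSON Lines (one JSON object per line) and drops blank and duplicate examples. The `prompt`/`completion` field names are an assumption, because providers and libraries expect different schemas. The helper `write_sft_jsonl` and the example data are invented for illustration.

```python
import json
import os
import tempfile

def write_sft_jsonl(pairs, path):
    """Write (input, output) pairs as JSON Lines, skipping blank and duplicate examples."""
    seen = set()
    kept = 0
    with open(path, "w", encoding="utf-8") as f:
        for prompt, completion in pairs:
            prompt, completion = prompt.strip(), completion.strip()
            # Blank examples teach the model nothing, so drop them.
            if not prompt or not completion:
                continue
            # Exact duplicates would over-weight repeated questions, so keep only the first.
            if (prompt, completion) in seen:
                continue
            seen.add((prompt, completion))
            # Hypothetical field names; check what your fine-tuning provider expects.
            f.write(json.dumps({"prompt": prompt, "completion": completion}) + "\n")
            kept += 1
    return kept

# Invented example data for illustration only.
pairs = [
    ("What is your returns window?", "Items can be returned within 30 days of delivery."),
    ("What is your returns window?", "Items can be returned within 30 days of delivery."),
    ("Do you ship abroad?", "Yes, we ship to selected countries; rates are shown at checkout."),
    ("   ", "This example has no input and is skipped."),
]
path = os.path.join(tempfile.mkdtemp(), "train.jsonl")
print(write_sft_jsonl(pairs, path))  # 2
```

In practice, quality checks like these matter as much as raw example counts.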

Fine-tuning approaches:

Supervised fine-tuning (SFT): Training on input-output pairs

Reinforcement Learning from Human Feedback (RLHF): optimising the model towards outputs that humans prefer

Parameter-efficient fine-tuning: training only a small set of added or selected parameters while the base model's weights stay frozen (e.g. LoRA, QLoRA)

Continued pre-training: further training on unlabelled domain-specific text, typically before supervised fine-tuning
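To see why parameter-efficient methods are cheaper, here is a back-of-the-envelope count for a single weight matrix. LoRA keeps the original matrix W (d_out × d_in) frozen and trains two small matrices B (d_out × r) and A (r × d_in), whose product B·A is added to W. The hidden size 4096 and rank 8 are illustrative values, not figures for a specific model. QLoRA also stores the frozen base weights in 4-bit precision to save memory, and this count does not capture that saving.

```python
def full_params(d_in, d_out):
    # Full fine-tuning updates every entry of the d_out x d_in weight matrix.
    return d_in * d_out

def lora_params(d_in, d_out, r):
    # LoRA freezes W and trains B (d_out x r) and A (r x d_in); the update is B @ A.
    return r * (d_in + d_out)

d = 4096  # illustrative hidden size of one square projection layer
full = full_params(d, d)
lora = lora_params(d, d, r=8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%
```

For this layer, LoRA trains well under 1% of the parameters that full fine-tuning would update.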

When fine-tuning brings ROI:

Your domain uses specialised language or jargon.

You require outputs that are consistent and predictable.

You process high volumes and need lower latency.

You have high-quality proprietary data.

You want a competitive advantage that is proprietary to you.


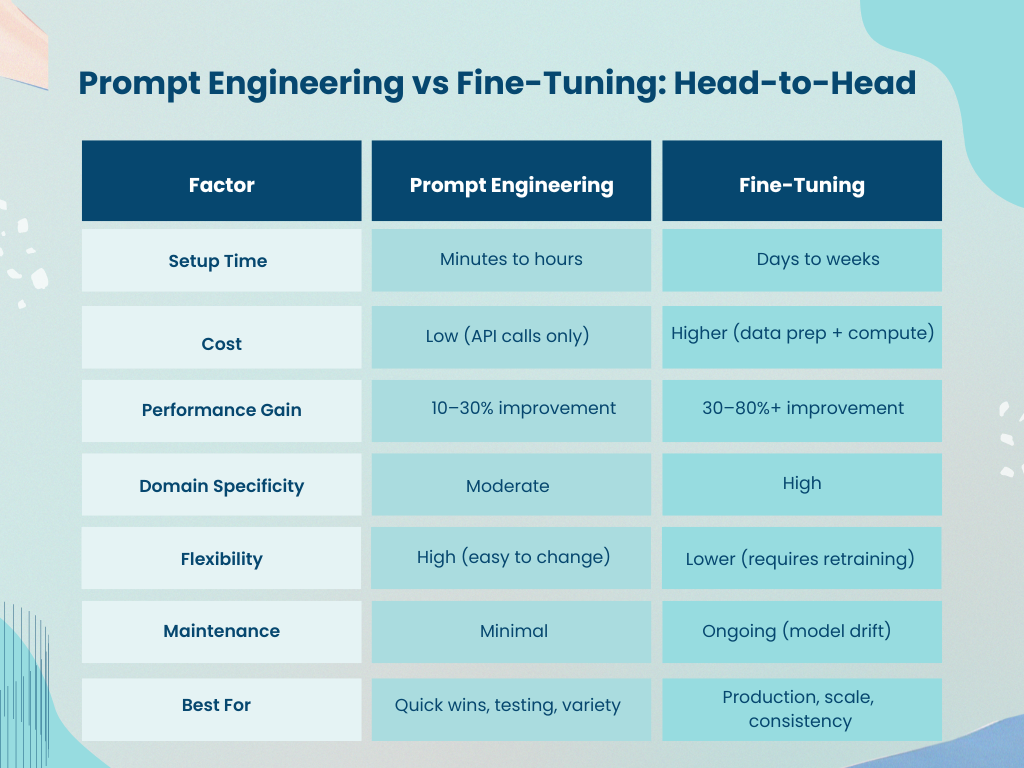

Prompt Engineering vs Fine-Tuning: Head-to-Head

How to Decide: A Framework

Start with these questions:

Do you have quality training data?

Yes → Fine-tuning is a viable option

No → Stick with prompt engineering for now

What are your volume and latency requirements?

If your volume is low and you can tolerate variable response times, prompt engineering will work fine.

If your volume is high and latency must be tightly controlled, fine-tuning can help. A fine-tuned model needs shorter prompts and can sometimes be a smaller model, which reduces per-call cost and response time.

How specialised is your domain?

General business use → Prompt engineering is often enough

Highly specialised (legal, medical, technical) → Fine-tuning likely needed

What's your budget for the project?

Limited budget → Start with prompt engineering

Flexible budget and a focus on long-term ROI → Consider fine-tuning

How critical is consistency?

Some variation is acceptable → Prompt engineering

Need predictable, reliable outputs → Fine-tuning
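The five questions above can be encoded as a simple checklist. The Python sketch below is one hypothetical way to do it. It treats missing training data as a hard gate and a limited budget as a reason to start with prompt engineering, then counts the remaining signals. The two-signal threshold and all names (`UseCase`, `recommend`) are assumptions for illustration, not a validated scoring model.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    has_quality_data: bool
    high_volume_strict_latency: bool
    specialised_domain: bool
    limited_budget: bool
    needs_consistency: bool

def recommend(uc: UseCase) -> str:
    """Walk the five framework questions and return a starting recommendation."""
    # Question 1 is a hard gate: no quality training data means no fine-tuning.
    if not uc.has_quality_data:
        return "prompt engineering"
    # Question 4: a limited budget means starting with prompt engineering.
    if uc.limited_budget:
        return "prompt engineering first"
    # Questions 2, 3 and 5: count the signals that favour fine-tuning.
    signals = sum([uc.high_volume_strict_latency, uc.specialised_domain, uc.needs_consistency])
    # Hypothetical threshold: two or more signals tip the decision.
    return "consider fine-tuning" if signals >= 2 else "prompt engineering"

medical = UseCase(has_quality_data=True, high_volume_strict_latency=False,
                  specialised_domain=True, limited_budget=False, needs_consistency=True)
faq_bot = UseCase(has_quality_data=False, high_volume_strict_latency=True,
                  specialised_domain=False, limited_budget=True, needs_consistency=False)
print(recommend(medical))  # consider fine-tuning
print(recommend(faq_bot))  # prompt engineering
```

A real decision should weigh these factors against each other rather than simply counting them. Even so, writing the checklist down makes the trade-offs explicit.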

Real-World Examples

Scenario 1: Customer Support Chatbot

A retail company wants an AI to answer FAQs.

Prompt engineering approach: Create a system prompt that includes company policies, brand voice, and escalation rules. It is quick, inexpensive, and suitable for most standard questions.

Fine-tuning approach: Train on 500+ past customer support conversations. The model learns the specific response patterns, the correct tone, and the situations that need escalation. It is the better option for edge cases.

Finding: Start with prompt engineering. Consider fine-tuning if performance gaps become evident.
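One way to make "performance gaps become evident" measurable is to score the prompt-engineered bot against a small held-out set of labelled conversations. The Python sketch below checks only the escalation decision. The labels, predictions, and 90% target are invented for illustration, and a real evaluation would also cover answer quality and tone.

```python
def escalation_accuracy(predictions, labels):
    """Share of test conversations where the escalate/don't-escalate decision matched the label."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("need equal-length, non-empty lists")
    correct = sum(p == l for p, l in zip(predictions, labels))
    return correct / len(labels)

# Hypothetical held-out set: True means the conversation should go to a human agent.
labels      = [True, False, False, True, False]
predictions = [True, False, True,  False, False]  # decisions from the prompt-engineered bot

acc = escalation_accuracy(predictions, labels)
TARGET = 0.9  # hypothetical quality bar set by the support team
if acc < TARGET:
    print(f"Escalation accuracy {acc:.0%} is below target; consider fine-tuning.")
```

Tracking a number like this over time helps show whether further prompt changes are still paying off or whether fine-tuning has become worth the investment.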

Scenario 2: Medical Document Summarisation

A healthcare organisation wants a system that automatically summarises patient reports while preserving medical terminology.

Prompt engineering approach: Use few-shot prompts with medical examples. This may work reasonably well, but important clinical nuances can be missed.

Fine-tuning approach: Train on 1,000+ anonymised medical reports. The model learns the healthcare context, keeps the necessary medical terms, and becomes familiar with the compliance requirements.

Finding: Fine-tuning is essential here. The stakes are too high, and domain specificity matters too much.
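Whichever approach you choose, you can automatically check one of the requirements above: the summary should keep the medical terms that matter. The Python sketch below flags glossary terms that appear in the source report but are missing from the summary. The glossary and report text are invented, and a check like this is only a sanity test, not a substitute for clinical review.

```python
import re

def missing_terms(source, summary, glossary):
    """Return glossary terms that appear in the source report but not in the summary."""
    def contains(text, term):
        # Whole-word, case-insensitive match, so "MI" would not match inside "mild".
        return re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE) is not None
    return [t for t in glossary if contains(source, t) and not contains(summary, t)]

# Invented example text for illustration only.
glossary = ["atrial fibrillation", "metoprolol", "ejection fraction"]
source = "Patient with atrial fibrillation, started on metoprolol. Ejection fraction 55%."
summary = "Patient has an irregular heartbeat and was started on metoprolol."
print(missing_terms(source, summary, glossary))  # ['atrial fibrillation', 'ejection fraction']
```

Running a check like this over a batch of summaries gives a rough, repeatable signal of how well each approach preserves domain terminology.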

Scenario 3: Sales Email Generation

A B2B SaaS company wants to personalise outreach emails.

Prompt engineering approach: Compose a template prompt with company context, product benefits, and personalisation variables. This works well for high email volumes and rapid iteration.

Fine-tuning approach: Train on emails that led to conversions. The model learns what resonates with your audience across a large volume of sales outreach.

Finding: Fine-tuning is necessary here to optimise at scale.

Hybrid approach: Use prompt engineering for quick variations and fine-tuning for continuous optimisation.
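The template-prompt approach from this scenario can be sketched with Python's standard `string.Template`. The fixed text carries the company context and product benefits, and the `$`-placeholders are the personalisation variables filled in for each recipient. The template wording, the `render_prompt` helper, and all example values (including "ExampleSaaS") are hypothetical.

```python
from string import Template

# Hypothetical template prompt: company context and benefits are fixed,
# $-placeholders are the personalisation variables filled per recipient.
EMAIL_PROMPT = Template(
    "You write B2B outreach emails for $company.\n"
    "Product benefits: $benefits.\n"
    "Write a short, friendly email to $name, the $title at $prospect, "
    "referencing their recent focus on $pain_point."
)

def render_prompt(company, benefits, recipient):
    # substitute() raises KeyError if any variable is missing, so gaps surface early.
    return EMAIL_PROMPT.substitute(company=company, benefits=", ".join(benefits), **recipient)

prompt = render_prompt(
    company="ExampleSaaS",
    benefits=["automated reporting", "SSO"],
    recipient={"name": "Priya", "title": "Head of Ops", "prospect": "Acme Ltd",
               "pain_point": "reducing manual reporting"},
)
print(prompt)
```

Using `substitute()` rather than `safe_substitute()` is a deliberate choice here: a missing variable fails loudly instead of sending an email prompt with a raw placeholder in it.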

The Hybrid Approach: Best of Both Worlds

Many companies combine both approaches in stages:

Use prompt engineering first to validate ideas and build quick prototypes

Collect the outputs of your best-performing prompts as training data

Use that data to fine-tune a production-ready model over time

Keep using prompt engineering for further optimisation and edge cases
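The second and third steps can be sketched as a filter over logged prompt-engineering runs. The Python sketch below keeps highly rated outputs and turns them into fine-tuning examples. The log structure, the 1 to 5 rating scale, the threshold of 4, and the field names are all assumptions. It pairs the plain user input with the good output. That is one common choice: the fine-tuned model then learns to produce that output without needing the long engineered prompt.

```python
import json

def build_training_set(logged_runs, min_rating=4):
    """Turn highly rated prompt-engineering runs into JSONL fine-tuning examples."""
    lines = []
    for run in logged_runs:
        # Keep only the best-performing outputs; weaker ones would teach weaker behaviour.
        if run["rating"] >= min_rating:
            # Pair the plain user input (not the engineered prompt) with the good output.
            lines.append(json.dumps({"prompt": run["user_input"], "completion": run["output"]}))
    return lines

# Invented log entries; the rating scale and field names are assumptions.
runs = [
    {"user_input": "Summarise ticket #1", "output": "Customer wants a refund.", "rating": 5},
    {"user_input": "Summarise ticket #2", "output": "Unclear.", "rating": 2},
    {"user_input": "Summarise ticket #3", "output": "Delivery is late; customer asks for an ETA.", "rating": 4},
]
training_lines = build_training_set(runs)
print(len(training_lines))  # 2
```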

This approach balances speed, cost, quality and performance.

At Advant AI Labs, we help enterprises build such workflows, from a proof-of-concept built with prompt engineering to scaled deployment with fine-tuned models. Our AI/ML research and development teams specialise in custom model fine-tuning and optimisation, so your AI delivers a competitive advantage at scale.

Key Takeaways: Prompt Engineering vs Fine-Tuning

Prompt engineering is fast, cheap, and adaptable, making it ideal for exploration and general-purpose tasks.

Fine-tuning requires more investment, but it can substantially improve performance in specialised domains.

Data is the deciding factor: if you have quality training data, the ROI of fine-tuning is easier to justify.

Start simple: use prompt engineering first, then move to fine-tuning as your requirements become clear.

Hybrid approaches work: use both at different stages of your AI journey.

Ready to Scale Your AI Strategy?

Whether you are optimising prompts or developing custom fine-tuned models, the right choice depends on your specific objectives, available data, and constraints.

Let's work out together what is best for your organisation. If you need industry-specific solutions powered by AI, including custom AI model development, fine-tuning, and generative AI solutions, Advant AI Labs can be your partner. Our team works with you from proof-of-concept to full-scale deployment to help you realise real business value from AI and understand how fine-tuning or prompt engineering can shape your AI strategy.

FAQs

1. Is prompt engineering better than fine-tuning?

Not necessarily. Prompt engineering is more efficient in terms of time and money, whereas fine-tuning delivers higher performance in specialised areas. Which one to use depends on the kind of task, your budget, and your data availability.

2. How much does fine-tuning cost?

Costs vary with the size of the model, the amount of data, and the compute resources required. Prompt engineering costs only API calls (cents to dollars per query), whereas fine-tuning usually costs hundreds to thousands of dollars, depending on scale and complexity.
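Whether fine-tuning pays off often comes down to volume. A fine-tuned model can work with much shorter prompts, so each query sends fewer input tokens. The Python sketch below estimates how many queries it takes for that saving to repay a one-off training cost. Every number is hypothetical, and the sketch assumes the fine-tuned model is billed at the same input-token price as the base model. In reality, fine-tuned inference is often priced differently, and output tokens also cost money, so treat this only as a way to structure the calculation.

```python
import math

def break_even_queries(fine_tune_cost, prompt_tokens_before, prompt_tokens_after, price_per_1k_tokens):
    """Queries needed before shorter prompts repay a one-off fine-tuning cost."""
    saving_per_query = (prompt_tokens_before - prompt_tokens_after) / 1000 * price_per_1k_tokens
    if saving_per_query <= 0:
        return math.inf  # no per-query saving, so the training cost is never recovered
    return math.ceil(fine_tune_cost / saving_per_query)

# Hypothetical numbers: a $2,000 training run, a 1,500-token engineered prompt
# cut to 200 tokens, and input priced at $0.002 per 1K tokens.
n = break_even_queries(2000, 1500, 200, 0.002)
print(f"{n:,} queries to break even")  # 769,231 queries to break even
```

At low volumes, break-even may never arrive, which is why the framework above ties fine-tuning ROI to high-volume use cases.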

3. Can I use prompt engineering instead of fine-tuning?

For the majority of use cases, the answer is yes. Prompt engineering is a good solution for general tasks, quick prototypes, and exploratory work. For domain-specific, high-volume, or mission-critical applications, however, fine-tuning often delivers better results.

4. How much training data do I need to fine-tune a model?

The ideal dataset size depends largely on the complexity of the task. As a rough guide, expect to need hundreds to thousands of examples. More data generally helps, but quality matters more than quantity.

5. Should we fine-tune or prompt-engineer our LLM?

Begin with prompt engineering to quickly test and validate your use case. As real-world feedback reveals recurring gaps or the need for deeper domain accuracy, move toward fine-tuning. Most mature AI teams ultimately leverage a mix of both.